# Multi-class classification

# Loading the IRIS training and validation sets

data.js

/**

* @license

* Copyright 2018 Google LLC. All Rights Reserved.

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

* =============================================================================

*/

import * as tf from "@tensorflow/tfjs";

export const IRIS_CLASSES = ["Iris setosa", "Iris versicolor", "Iris virginica"];

export const IRIS_NUM_CLASSES = IRIS_CLASSES.length;

// Iris flowers data. Source:

// https://archive.ics.uci.edu/ml/machine-learning-databases/iris/iris.data

const IRIS_DATA = [

[5.1, 3.5, 1.4, 0.2, 0],

[4.9, 3.0, 1.4, 0.2, 0],

[4.7, 3.2, 1.3, 0.2, 0],

[4.6, 3.1, 1.5, 0.2, 0],

[5.0, 3.6, 1.4, 0.2, 0],

[5.4, 3.9, 1.7, 0.4, 0],

[4.6, 3.4, 1.4, 0.3, 0],

[5.0, 3.4, 1.5, 0.2, 0],

[4.4, 2.9, 1.4, 0.2, 0],

[4.9, 3.1, 1.5, 0.1, 0],

[5.4, 3.7, 1.5, 0.2, 0],

[4.8, 3.4, 1.6, 0.2, 0],

[4.8, 3.0, 1.4, 0.1, 0],

[4.3, 3.0, 1.1, 0.1, 0],

[5.8, 4.0, 1.2, 0.2, 0],

[5.7, 4.4, 1.5, 0.4, 0],

[5.4, 3.9, 1.3, 0.4, 0],

[5.1, 3.5, 1.4, 0.3, 0],

[5.7, 3.8, 1.7, 0.3, 0],

[5.1, 3.8, 1.5, 0.3, 0],

[5.4, 3.4, 1.7, 0.2, 0],

[5.1, 3.7, 1.5, 0.4, 0],

[4.6, 3.6, 1.0, 0.2, 0],

[5.1, 3.3, 1.7, 0.5, 0],

[4.8, 3.4, 1.9, 0.2, 0],

[5.0, 3.0, 1.6, 0.2, 0],

[5.0, 3.4, 1.6, 0.4, 0],

[5.2, 3.5, 1.5, 0.2, 0],

[5.2, 3.4, 1.4, 0.2, 0],

[4.7, 3.2, 1.6, 0.2, 0],

[4.8, 3.1, 1.6, 0.2, 0],

[5.4, 3.4, 1.5, 0.4, 0],

[5.2, 4.1, 1.5, 0.1, 0],

[5.5, 4.2, 1.4, 0.2, 0],

[4.9, 3.1, 1.5, 0.1, 0],

[5.0, 3.2, 1.2, 0.2, 0],

[5.5, 3.5, 1.3, 0.2, 0],

[4.9, 3.1, 1.5, 0.1, 0],

[4.4, 3.0, 1.3, 0.2, 0],

[5.1, 3.4, 1.5, 0.2, 0],

[5.0, 3.5, 1.3, 0.3, 0],

[4.5, 2.3, 1.3, 0.3, 0],

[4.4, 3.2, 1.3, 0.2, 0],

[5.0, 3.5, 1.6, 0.6, 0],

[5.1, 3.8, 1.9, 0.4, 0],

[4.8, 3.0, 1.4, 0.3, 0],

[5.1, 3.8, 1.6, 0.2, 0],

[4.6, 3.2, 1.4, 0.2, 0],

[5.3, 3.7, 1.5, 0.2, 0],

[5.0, 3.3, 1.4, 0.2, 0],

[7.0, 3.2, 4.7, 1.4, 1],

[6.4, 3.2, 4.5, 1.5, 1],

[6.9, 3.1, 4.9, 1.5, 1],

[5.5, 2.3, 4.0, 1.3, 1],

[6.5, 2.8, 4.6, 1.5, 1],

[5.7, 2.8, 4.5, 1.3, 1],

[6.3, 3.3, 4.7, 1.6, 1],

[4.9, 2.4, 3.3, 1.0, 1],

[6.6, 2.9, 4.6, 1.3, 1],

[5.2, 2.7, 3.9, 1.4, 1],

[5.0, 2.0, 3.5, 1.0, 1],

[5.9, 3.0, 4.2, 1.5, 1],

[6.0, 2.2, 4.0, 1.0, 1],

[6.1, 2.9, 4.7, 1.4, 1],

[5.6, 2.9, 3.6, 1.3, 1],

[6.7, 3.1, 4.4, 1.4, 1],

[5.6, 3.0, 4.5, 1.5, 1],

[5.8, 2.7, 4.1, 1.0, 1],

[6.2, 2.2, 4.5, 1.5, 1],

[5.6, 2.5, 3.9, 1.1, 1],

[5.9, 3.2, 4.8, 1.8, 1],

[6.1, 2.8, 4.0, 1.3, 1],

[6.3, 2.5, 4.9, 1.5, 1],

[6.1, 2.8, 4.7, 1.2, 1],

[6.4, 2.9, 4.3, 1.3, 1],

[6.6, 3.0, 4.4, 1.4, 1],

[6.8, 2.8, 4.8, 1.4, 1],

[6.7, 3.0, 5.0, 1.7, 1],

[6.0, 2.9, 4.5, 1.5, 1],

[5.7, 2.6, 3.5, 1.0, 1],

[5.5, 2.4, 3.8, 1.1, 1],

[5.5, 2.4, 3.7, 1.0, 1],

[5.8, 2.7, 3.9, 1.2, 1],

[6.0, 2.7, 5.1, 1.6, 1],

[5.4, 3.0, 4.5, 1.5, 1],

[6.0, 3.4, 4.5, 1.6, 1],

[6.7, 3.1, 4.7, 1.5, 1],

[6.3, 2.3, 4.4, 1.3, 1],

[5.6, 3.0, 4.1, 1.3, 1],

[5.5, 2.5, 4.0, 1.3, 1],

[5.5, 2.6, 4.4, 1.2, 1],

[6.1, 3.0, 4.6, 1.4, 1],

[5.8, 2.6, 4.0, 1.2, 1],

[5.0, 2.3, 3.3, 1.0, 1],

[5.6, 2.7, 4.2, 1.3, 1],

[5.7, 3.0, 4.2, 1.2, 1],

[5.7, 2.9, 4.2, 1.3, 1],

[6.2, 2.9, 4.3, 1.3, 1],

[5.1, 2.5, 3.0, 1.1, 1],

[5.7, 2.8, 4.1, 1.3, 1],

[6.3, 3.3, 6.0, 2.5, 2],

[5.8, 2.7, 5.1, 1.9, 2],

[7.1, 3.0, 5.9, 2.1, 2],

[6.3, 2.9, 5.6, 1.8, 2],

[6.5, 3.0, 5.8, 2.2, 2],

[7.6, 3.0, 6.6, 2.1, 2],

[4.9, 2.5, 4.5, 1.7, 2],

[7.3, 2.9, 6.3, 1.8, 2],

[6.7, 2.5, 5.8, 1.8, 2],

[7.2, 3.6, 6.1, 2.5, 2],

[6.5, 3.2, 5.1, 2.0, 2],

[6.4, 2.7, 5.3, 1.9, 2],

[6.8, 3.0, 5.5, 2.1, 2],

[5.7, 2.5, 5.0, 2.0, 2],

[5.8, 2.8, 5.1, 2.4, 2],

[6.4, 3.2, 5.3, 2.3, 2],

[6.5, 3.0, 5.5, 1.8, 2],

[7.7, 3.8, 6.7, 2.2, 2],

[7.7, 2.6, 6.9, 2.3, 2],

[6.0, 2.2, 5.0, 1.5, 2],

[6.9, 3.2, 5.7, 2.3, 2],

[5.6, 2.8, 4.9, 2.0, 2],

[7.7, 2.8, 6.7, 2.0, 2],

[6.3, 2.7, 4.9, 1.8, 2],

[6.7, 3.3, 5.7, 2.1, 2],

[7.2, 3.2, 6.0, 1.8, 2],

[6.2, 2.8, 4.8, 1.8, 2],

[6.1, 3.0, 4.9, 1.8, 2],

[6.4, 2.8, 5.6, 2.1, 2],

[7.2, 3.0, 5.8, 1.6, 2],

[7.4, 2.8, 6.1, 1.9, 2],

[7.9, 3.8, 6.4, 2.0, 2],

[6.4, 2.8, 5.6, 2.2, 2],

[6.3, 2.8, 5.1, 1.5, 2],

[6.1, 2.6, 5.6, 1.4, 2],

[7.7, 3.0, 6.1, 2.3, 2],

[6.3, 3.4, 5.6, 2.4, 2],

[6.4, 3.1, 5.5, 1.8, 2],

[6.0, 3.0, 4.8, 1.8, 2],

[6.9, 3.1, 5.4, 2.1, 2],

[6.7, 3.1, 5.6, 2.4, 2],

[6.9, 3.1, 5.1, 2.3, 2],

[5.8, 2.7, 5.1, 1.9, 2],

[6.8, 3.2, 5.9, 2.3, 2],

[6.7, 3.3, 5.7, 2.5, 2],

[6.7, 3.0, 5.2, 2.3, 2],

[6.3, 2.5, 5.0, 1.9, 2],

[6.5, 3.0, 5.2, 2.0, 2],

[6.2, 3.4, 5.4, 2.3, 2],

[5.9, 3.0, 5.1, 1.8, 2]

];

/**

* Convert Iris data arrays to `tf.Tensor`s.

*

* @param data The Iris input feature data, an `Array` of `Array`s, each element

* of which is assumed to be a length-4 `Array` (for sepal length, sepal

* width, petal length, petal width).

* @param targets An `Array` of numbers, with values from the set {0, 1, 2}:

* representing the true category of the Iris flower. Assumed to have the same

* array length as `data`.

* @param testSplit Fraction of the data at the end to split as test data: a

* number between 0 and 1.

* @return A length-4 `Array`, with

* - training data as `tf.Tensor` of shape [numTrainExamples, 4].

* - training one-hot labels as a `tf.Tensor` of shape [numTrainExamples, 3]

* - test data as `tf.Tensor` of shape [numTestExamples, 4].

* - test one-hot labels as a `tf.Tensor` of shape [numTestExamples, 3]

*/

function convertToTensors(data, targets, testSplit) {

const numExamples = data.length;

if (numExamples !== targets.length) {

throw new Error("data and targets have different numbers of examples");

}

// Randomly shuffle `data` and `targets`.

const indices = [];

for (let i = 0; i < numExamples; ++i) {

indices.push(i);

}

tf.util.shuffle(indices);

const shuffledData = [];

const shuffledTargets = [];

for (let i = 0; i < numExamples; ++i) {

shuffledData.push(data[indices[i]]);

shuffledTargets.push(targets[indices[i]]);

}

// Split the data into a training set and a test set, based on `testSplit`.

const numTestExamples = Math.round(numExamples * testSplit);

const numTrainExamples = numExamples - numTestExamples;

const xDims = shuffledData[0].length;

// Create a 2D `tf.Tensor` to hold the feature data.

const xs = tf.tensor2d(shuffledData, [numExamples, xDims]);

// Create a 1D `tf.Tensor` to hold the labels, and convert the number label

// from the set {0, 1, 2} into one-hot encoding (e.g., 0 --> [1, 0, 0]).

const ys = tf.oneHot(tf.tensor1d(shuffledTargets).toInt(), IRIS_NUM_CLASSES);

// Split the data into training and test sets, using `slice`.

const xTrain = xs.slice([0, 0], [numTrainExamples, xDims]);

const xTest = xs.slice([numTrainExamples, 0], [numTestExamples, xDims]);

const yTrain = ys.slice([0, 0], [numTrainExamples, IRIS_NUM_CLASSES]);

const yTest = ys.slice([numTrainExamples, 0], [numTestExamples, IRIS_NUM_CLASSES]);

return [xTrain, yTrain, xTest, yTest];

}

/**

* Obtains Iris data, split into training and test sets.

*

* @param testSplit Fraction of the data at the end to split as test data: a

* number between 0 and 1.

*

* @return A length-4 `Array`, with

* - training data as a `tf.Tensor` of shape [numTrainExamples, 4].

* - training one-hot labels as a `tf.Tensor` of shape [numTrainExamples, 3].

* - test data as a `tf.Tensor` of shape [numTestExamples, 4].

* - test one-hot labels as a `tf.Tensor` of shape [numTestExamples, 3].

*/

export function getIrisData(testSplit) {

return tf.tidy(() => {

const dataByClass = [];

const targetsByClass = [];

for (let i = 0; i < IRIS_CLASSES.length; ++i) {

dataByClass.push([]);

targetsByClass.push([]);

}

for (const example of IRIS_DATA) {

const target = example[example.length - 1];

const data = example.slice(0, example.length - 1);

dataByClass[target].push(data);

targetsByClass[target].push(target);

}

const xTrains = [];

const yTrains = [];

const xTests = [];

const yTests = [];

for (let i = 0; i < IRIS_CLASSES.length; ++i) {

const [xTrain, yTrain, xTest, yTest] = convertToTensors(

dataByClass[i],

targetsByClass[i],

testSplit

);

xTrains.push(xTrain);

yTrains.push(yTrain);

xTests.push(xTest);

yTests.push(yTest);

}

const concatAxis = 0;

return [

tf.concat(xTrains, concatAxis),

tf.concat(yTrains, concatAxis),

tf.concat(xTests, concatAxis),

tf.concat(yTests, concatAxis)

];

});

}
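`getIrisData` groups the examples by class and splits each class separately, so every class contributes the same fraction of its examples to the test set. The sketch below illustrates that idea with plain arrays instead of tensors. The names `shuffled` and `stratifiedSplit` and the toy rows are made up for this illustration and are not part of data.js.

```javascript
// Hypothetical helper (not in data.js): Fisher-Yates shuffle of a copy.
function shuffled(items) {
  const copy = items.slice();
  for (let i = copy.length - 1; i > 0; --i) {
    const j = Math.floor(Math.random() * (i + 1));
    [copy[i], copy[j]] = [copy[j], copy[i]];
  }
  return copy;
}

// Split rows of the form [f1, f2, f3, f4, label] class by class, so that
// each class puts the same fraction of its examples into the test set.
function stratifiedSplit(rows, numClasses, testSplit) {
  const byClass = Array.from({ length: numClasses }, () => []);
  for (const row of rows) {
    byClass[row[row.length - 1]].push(row);
  }
  const trainRows = [];
  const testRows = [];
  for (const classRows of byClass) {
    const mixed = shuffled(classRows);
    const numTest = Math.round(mixed.length * testSplit);
    const numTrain = mixed.length - numTest;
    trainRows.push(...mixed.slice(0, numTrain));
    testRows.push(...mixed.slice(numTrain));
  }
  return { trainRows, testRows };
}

// Toy data: 3 classes with 4 examples each (feature values are made up).
const toyRows = [];
for (let label = 0; label < 3; ++label) {
  for (let k = 0; k < 4; ++k) {
    toyRows.push([label + k / 10, 0, 0, 0, label]);
  }
}

const { trainRows, testRows } = stratifiedSplit(toyRows, 3, 0.25);
console.log(trainRows.length, testRows.length); // 9 3
```

Splitting per class keeps the class proportions identical in both sets, which matters on a small dataset where a purely random split could leave one class under-represented in the validation data.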

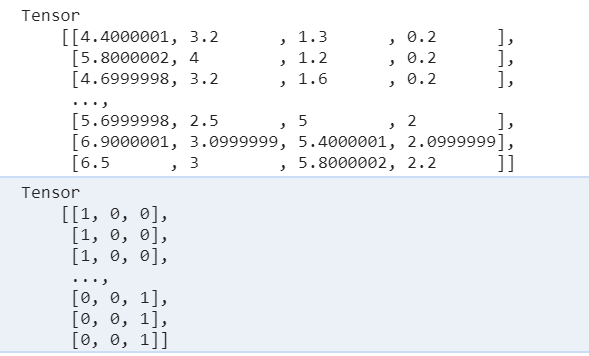

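```js
import { getIrisData, IRIS_CLASSES } from "./data";

window.onload = () => {
  // Get the training set (xTrain, yTrain) and the validation set (xTest, yTest), in that order.
  // The argument to getIrisData() is the fraction held out for validation: here 15%.
  const [xTrain, yTrain, xTest, yTest] = getIrisData(0.15);
  xTrain.print();
  yTrain.print();
};
```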

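- The training set and the validation set have the same structure; both are drawn from the same dataset.
- Each row of x holds the 4 feature values of one flower.
- Each row of y holds the class of that flower, stored as a row of a 2D tensor that lines up with the corresponding row of x.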
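The y rows printed above are one-hot encoded: each class index becomes a row with a single 1 at that index. As a rough illustration of what `tf.oneHot` produces in data.js, here is a plain-array sketch; the function name `oneHot` is chosen for this example only.

```javascript
// Convert integer class labels into one-hot rows, e.g. 2 -> [0, 0, 1].
function oneHot(labels, numClasses) {
  return labels.map(label => {
    const row = new Array(numClasses).fill(0);
    row[label] = 1;
    return row;
  });
}

const encoded = oneHot([0, 2, 1], 3);
console.log(JSON.stringify(encoded)); // [[1,0,0],[0,0,1],[0,1,0]]
```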

# Defining the model: a multi-layer neural network with softmax

The softmax activation function outputs a probability for each class.
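To make this concrete, the following is a minimal plain-JavaScript sketch of softmax, not the TensorFlow.js implementation: it exponentiates each raw output and divides by the sum, so the results are positive and add up to 1. Subtracting the maximum before exponentiating is a common numerical-stability trick and does not change the result. The input values are arbitrary.

```javascript
// Softmax over one row of raw outputs (logits).
function softmax(logits) {
  const maxLogit = Math.max(...logits); // subtract the max for numerical stability
  const exps = logits.map(z => Math.exp(z - maxLogit));
  const sum = exps.reduce((a, b) => a + b, 0);
  return exps.map(e => e / sum);
}

const probs = softmax([2.0, 1.0, 0.1]);
console.log(probs.map(p => p.toFixed(3))); // [ '0.659', '0.242', '0.099' ]
```

The largest raw output gets the largest probability, which is why the class with the highest softmax output is taken as the prediction.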

```js
import * as tf from "@tensorflow/tfjs";
import { getIrisData, IRIS_CLASSES } from "./data";

window.onload = () => {
  // Get the training set (xTrain, yTrain) and the validation set (xTest, yTest), in that order.
  // The argument to getIrisData() is the fraction held out for validation: here 15%.
  const [xTrain, yTrain, xTest, yTest] = getIrisData(0.15);

  const model = tf.sequential();

  // Hidden layer. The 10 units are chosen by intuition, not tuned.
  model.add(
    tf.layers.dense({
      units: 10,
      inputShape: [xTrain.shape[1]],
      activation: "sigmoid"
    })
  );

  // Output layer, the core of a multi-class network: 3 units, one per class.
  // The softmax activation yields a probability for each class.
  model.add(
    tf.layers.dense({
      units: 3,
      activation: "softmax"
    })
  );
};
```

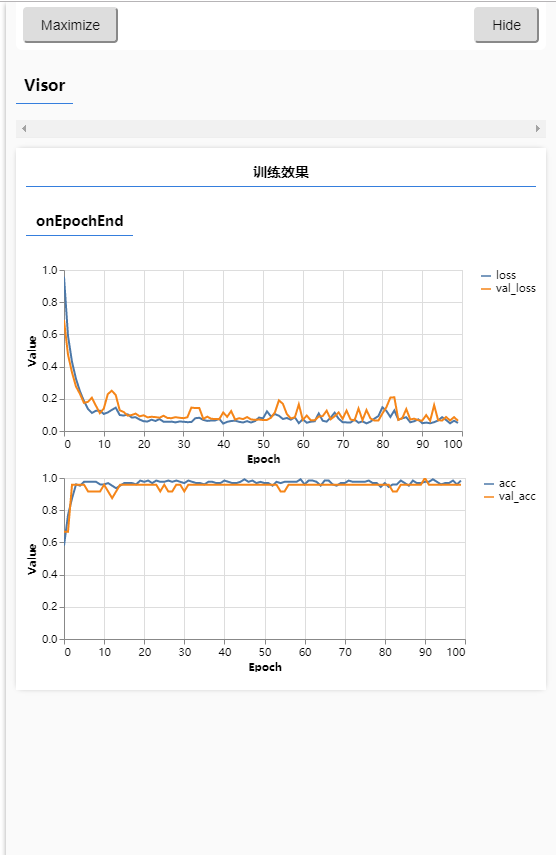

# Training the model: cross-entropy loss and the accuracy metric

```js
import * as tf from "@tensorflow/tfjs";
import * as tfvis from "@tensorflow/tfjs-vis";
import { getIrisData, IRIS_CLASSES } from "./data";

window.onload = async () => {
  // Get the training set (xTrain, yTrain) and the validation set (xTest, yTest), in that order.
  // The argument to getIrisData() is the fraction held out for validation: here 15%.
  const [xTrain, yTrain, xTest, yTest] = getIrisData(0.15);

  const model = tf.sequential();

  // Hidden layer. The 10 units are chosen by intuition, not tuned.
  model.add(
    tf.layers.dense({
      units: 10,
      inputShape: [xTrain.shape[1]],
      activation: "sigmoid"
    })
  );

  // Output layer, the core of a multi-class network: 3 units, one per class.
  // The softmax activation yields a probability for each class.
  model.add(
    tf.layers.dense({
      units: 3,
      activation: "softmax"
    })
  );

  // Set the loss function, the optimizer, and the accuracy metric.
  model.compile({
    // Cross-entropy loss
    loss: "categoricalCrossentropy",
    optimizer: tf.train.adam(0.1),
    // Accuracy
    metrics: ["accuracy"]
  });

  // Train the model.
  await model.fit(xTrain, yTrain, {
    epochs: 100,
    // Add the validation set.
    validationData: [xTest, yTest],
    callbacks: tfvis.show.fitCallbacks(
      { name: "Training performance" },
      // Plot training loss, validation loss, training accuracy, and validation accuracy.
      ["loss", "val_loss", "acc", "val_acc"],
      { callbacks: ["onEpochEnd"] }
    )
  });
};
```

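- The blue line is the training set and the yellow line is the validation set. For both loss and accuracy the two lines stay intertwined, so the model performs about equally well on both sets, which indicates that training is heading in the right direction.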

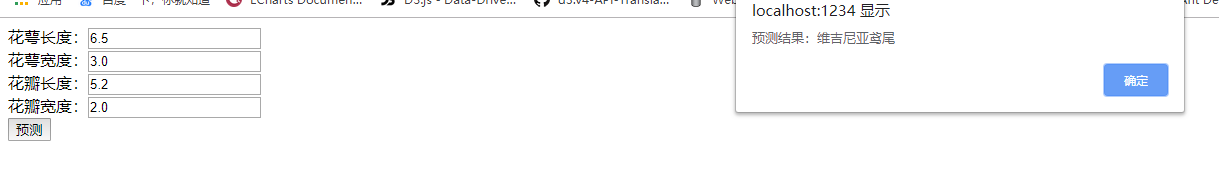
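The two quantities plotted during training can also be computed by hand. The sketch below is a plain-JavaScript approximation of categorical cross-entropy and accuracy, assuming one-hot labels and per-class probabilities as inputs; it is not the TensorFlow.js implementation, and the `eps` clipping value and the sample predictions are assumptions made for this example.

```javascript
// Mean categorical cross-entropy: -sum(yTrue * log(yPred)) per example, averaged.
function categoricalCrossentropy(yTrue, yPred) {
  const eps = 1e-7; // assumed guard against log(0)
  let total = 0;
  for (let i = 0; i < yTrue.length; ++i) {
    for (let j = 0; j < yTrue[i].length; ++j) {
      total -= yTrue[i][j] * Math.log(Math.max(yPred[i][j], eps));
    }
  }
  return total / yTrue.length;
}

// Index of the largest value in a row.
function argMax(values) {
  return values.indexOf(Math.max(...values));
}

// Fraction of examples whose most probable class matches the label.
function accuracy(yTrue, yPred) {
  let correct = 0;
  for (let i = 0; i < yTrue.length; ++i) {
    if (argMax(yTrue[i]) === argMax(yPred[i])) {
      ++correct;
    }
  }
  return correct / yTrue.length;
}

const labels = [[1, 0, 0], [0, 1, 0], [0, 0, 1]];
const predictions = [[0.8, 0.1, 0.1], [0.2, 0.7, 0.1], [0.3, 0.5, 0.2]];

console.log(categoricalCrossentropy(labels, predictions).toFixed(3)); // 0.730
console.log(accuracy(labels, predictions).toFixed(2)); // 0.67
```

Only the probability assigned to the true class contributes to the loss, so a confident wrong prediction (the third example) is penalized much more heavily than the two correct ones.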

# Prediction

```html
<script src="./script.js"></script>
<form action="" onsubmit="predict(this);return false;">
  Sepal length: <input type="text" name="a" /><br />
  Sepal width: <input type="text" name="b" /><br />
  Petal length: <input type="text" name="c" /><br />
  Petal width: <input type="text" name="d" /><br />
  <button type="submit">Predict</button>
</form>
```

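```js
import * as tf from "@tensorflow/tfjs";
import * as tfvis from "@tensorflow/tfjs-vis";
import { getIrisData, IRIS_CLASSES } from "./data";

window.onload = async () => {
  // Get the training set (xTrain, yTrain) and the validation set (xTest, yTest), in that order.
  // The argument to getIrisData() is the fraction held out for validation: here 15%.
  const [xTrain, yTrain, xTest, yTest] = getIrisData(0.15);

  const model = tf.sequential();

  // Hidden layer. The 10 units are chosen by intuition, not tuned.
  model.add(
    tf.layers.dense({
      units: 10,
      inputShape: [xTrain.shape[1]],
      activation: "sigmoid"
    })
  );

  // Output layer, the core of a multi-class network: 3 units, one per class.
  // The softmax activation yields a probability for each class.
  model.add(
    tf.layers.dense({
      units: 3,
      activation: "softmax"
    })
  );

  // Set the loss function, the optimizer, and the accuracy metric.
  model.compile({
    // Cross-entropy loss
    loss: "categoricalCrossentropy",
    optimizer: tf.train.adam(0.1),
    // Accuracy
    metrics: ["accuracy"]
  });

  await model.fit(xTrain, yTrain, {
    epochs: 100,
    // Add the validation set.
    validationData: [xTest, yTest],
    callbacks: tfvis.show.fitCallbacks(
      { name: "Training performance" },
      // Plot training loss, validation loss, training accuracy, and validation accuracy.
      ["loss", "val_loss", "acc", "val_acc"],
      { callbacks: ["onEpochEnd"] }
    )
  });

  window.predict = form => {
    // Build a [1, 4] tensor from the four form fields.
    const input = tf.tensor([
      [
        Number(form.a.value),
        Number(form.b.value),
        Number(form.c.value),
        Number(form.d.value)
      ]
    ]);
    const pred = model.predict(input);
    // As seen earlier, y is a 2D array such as [[0, 0, 1], [1, 0, 0]].
    // pred.argMax(1) returns, along axis 1, the index of the largest value in each row
    // (index 2 for [0, 0, 1]), which matches the index into IRIS_CLASSES.
    // dataSync() takes no arguments and returns a typed array, so take its first element.
    alert(`Prediction: ${IRIS_CLASSES[pred.argMax(1).dataSync()[0]]}`);
  };
};
```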
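The last step of `predict`, turning a row of class probabilities into a class name, can be sketched without TensorFlow.js. The example below also shows one possible way to reject non-numeric form input before it reaches the model. `CLASS_NAMES`, `parseFeatures`, and `predictedClass` are hypothetical names for this illustration, and the probabilities are invented rather than produced by the trained model.

```javascript
// Placeholder labels standing in for IRIS_CLASSES.
const CLASS_NAMES = ["setosa", "versicolor", "virginica"];

// Convert raw text inputs to numbers, rejecting empty or non-numeric values.
function parseFeatures(rawValues) {
  return rawValues.map(raw => {
    const value = Number(raw);
    if (String(raw).trim() === "" || Number.isNaN(value)) {
      throw new Error(`Not a number: "${raw}"`);
    }
    return value;
  });
}

// Index of the largest value in a row.
function argMax(values) {
  return values.indexOf(Math.max(...values));
}

// Map one row of class probabilities to its class name.
function predictedClass(probabilities) {
  return CLASS_NAMES[argMax(probabilities)];
}

console.log(parseFeatures(["5.9", "3.0", "5.1", "1.8"])); // [ 5.9, 3, 5.1, 1.8 ]
console.log(predictedClass([0.02, 0.13, 0.85])); // virginica
```

Validating the input first avoids passing `NaN` into the model, which would otherwise yield a meaningless prediction instead of a visible error.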
